New Leadership Challenges with AI Facing U.S. Companies

According to McKinsey's 2025 State of AI survey, 78% of organizations now use AI in at least one function, yet nearly two-thirds have not begun scaling it.

The tools are everywhere, but the structure to support them is missing.

In practice, that gap appears quickly.

Your marketing team is using one AI tool for campaign copy. Sales has signed up for AI-powered lead scoring. Customer support has deployed a chatbot without telling IT. Meanwhile, your CEO wants a report on AI’s productivity impact, but no one can agree on what to measure.

What follows are the five leadership challenges that emerge when AI adoption outpaces coordination.

TL;DR: AI adoption isn't a technology problem anymore — it's a leadership and coordination problem. Companies that succeed build clear governance, structured workflows, transparent communication, and unified systems around AI rather than letting tools proliferate without oversight.

Why AI is creating new leadership pressures

A separate McKinsey workplace report highlights the scale of the disconnect. Almost all companies are investing in AI, but only 1% believe they have reached maturity. AI maturity refers to how effectively an organization integrates AI into core workflows, decision-making, and measurable business outcomes beyond isolated experimentation.

At the same time, employees are using AI in their daily work far more than executives expect. Adoption is happening organically across teams, often without coordination or oversight.

This gap between widespread use and limited structure is what creates the leadership challenges that follow.

Challenge 1: Managing human-AI collaboration

The question most teams haven't answered is deceptively simple: who is responsible for what?

Define clear roles between people and AI

AI can accelerate tasks, but it should never remove human oversight. The most effective organizations establish clear boundaries:

| Function | What AI handles | What humans own | Where oversight lives |
| --- | --- | --- | --- |
| Content creation | Drafts, outlines, variations, and first passes | Strategy, tone, brand alignment, and final approval | Marketing lead reviews all published content |
| Customer support | Routine inquiries, ticket routing, suggested responses | Complex issues, escalations, relationship decisions | Support manager monitors AI accuracy weekly |
| Data analysis | Pattern recognition, trend identification, report generation | Interpretation, context, strategic recommendations | Department head validates before action is taken |
| Sales outreach | Lead scoring, email personalization, follow-up sequencing | Relationship judgment, deal negotiation, account strategy | Sales manager reviews AI-prioritized pipeline |
| HR and recruiting | Resume screening, scheduling, FAQ responses | Candidate evaluation, culture fit, offer decisions | HR lead audits screening criteria quarterly |

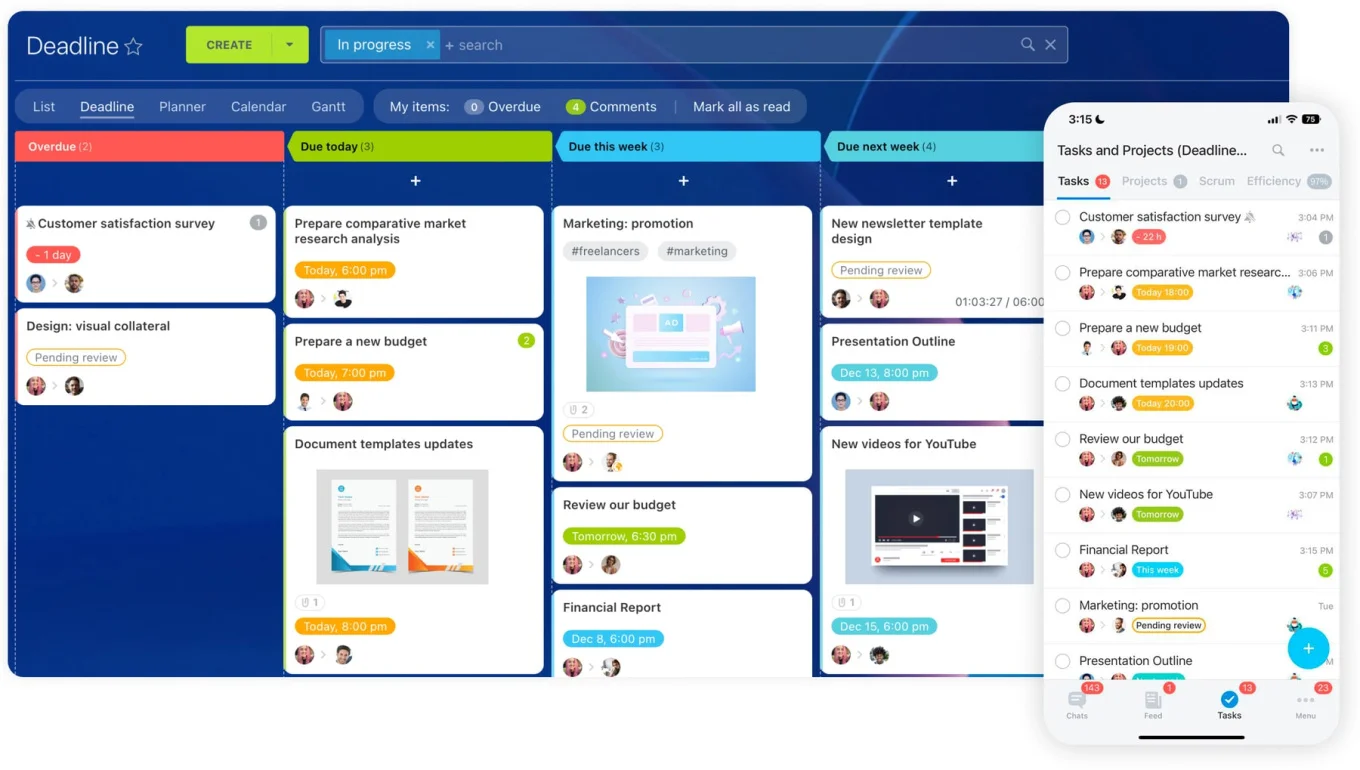

Build structured workflows around AI outputs

Human-AI collaboration works best when processes are defined. Leaders need systems that:

- Track AI-assisted tasks and projects alongside manual work

- Assign responsibility for review and approval at each stage

- Maintain accountability for final outcomes

Protect human judgment

Even advanced AI cannot replace experience, context, or ethical reasoning. Encourage employees to question AI-generated outputs, validate insights against domain knowledge, and apply critical thinking before acting on recommendations.

For a deeper look at where this balance matters most, see balancing automation and the human touch.

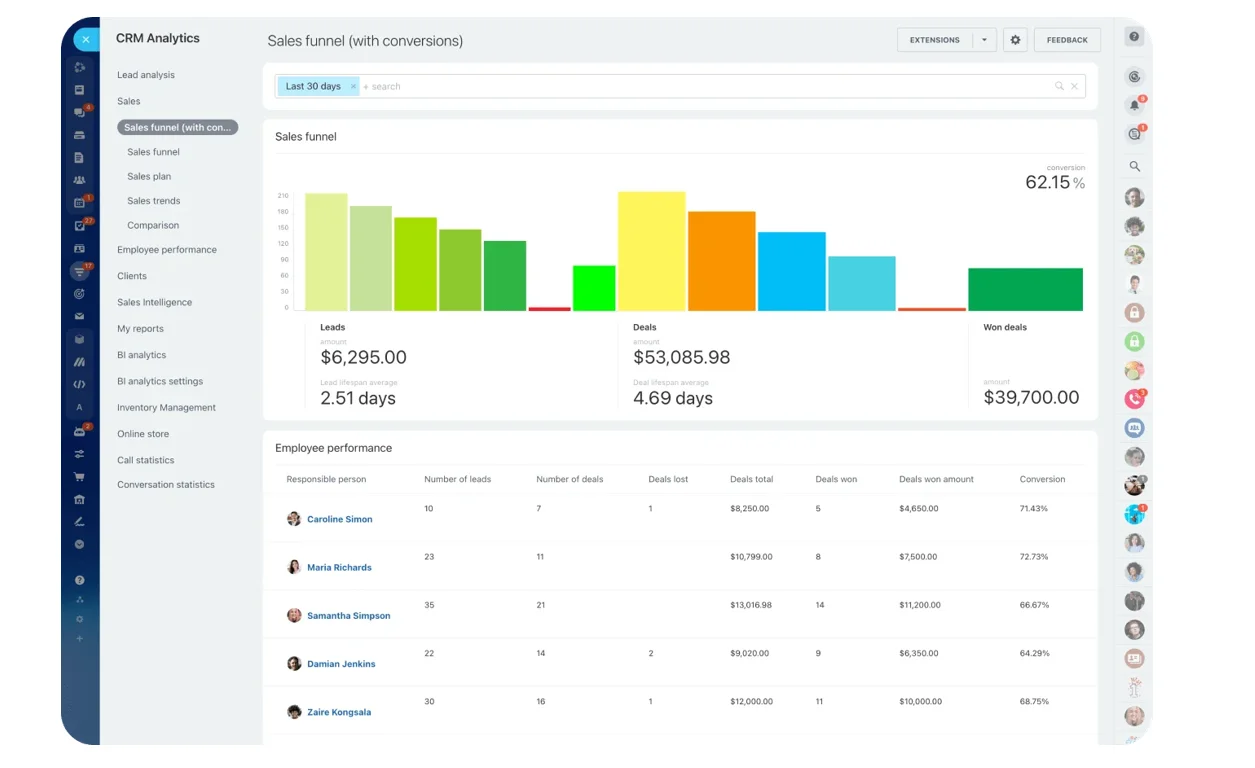

Top tip: Measure decisions, not just output

Most teams track AI usage or time saved, but that misses the real impact. Instead, focus on decision quality and speed. Are teams making better calls, faster, with clearer reasoning? That’s where AI creates leverage.

Challenge 2: Maintaining employee trust

AI adoption brings excitement, but it often brings quiet anxiety too. Many employees privately wonder how these changes will affect their roles. If those concerns go unaddressed, trust erodes and adoption stalls.

Address concerns before they become resistance

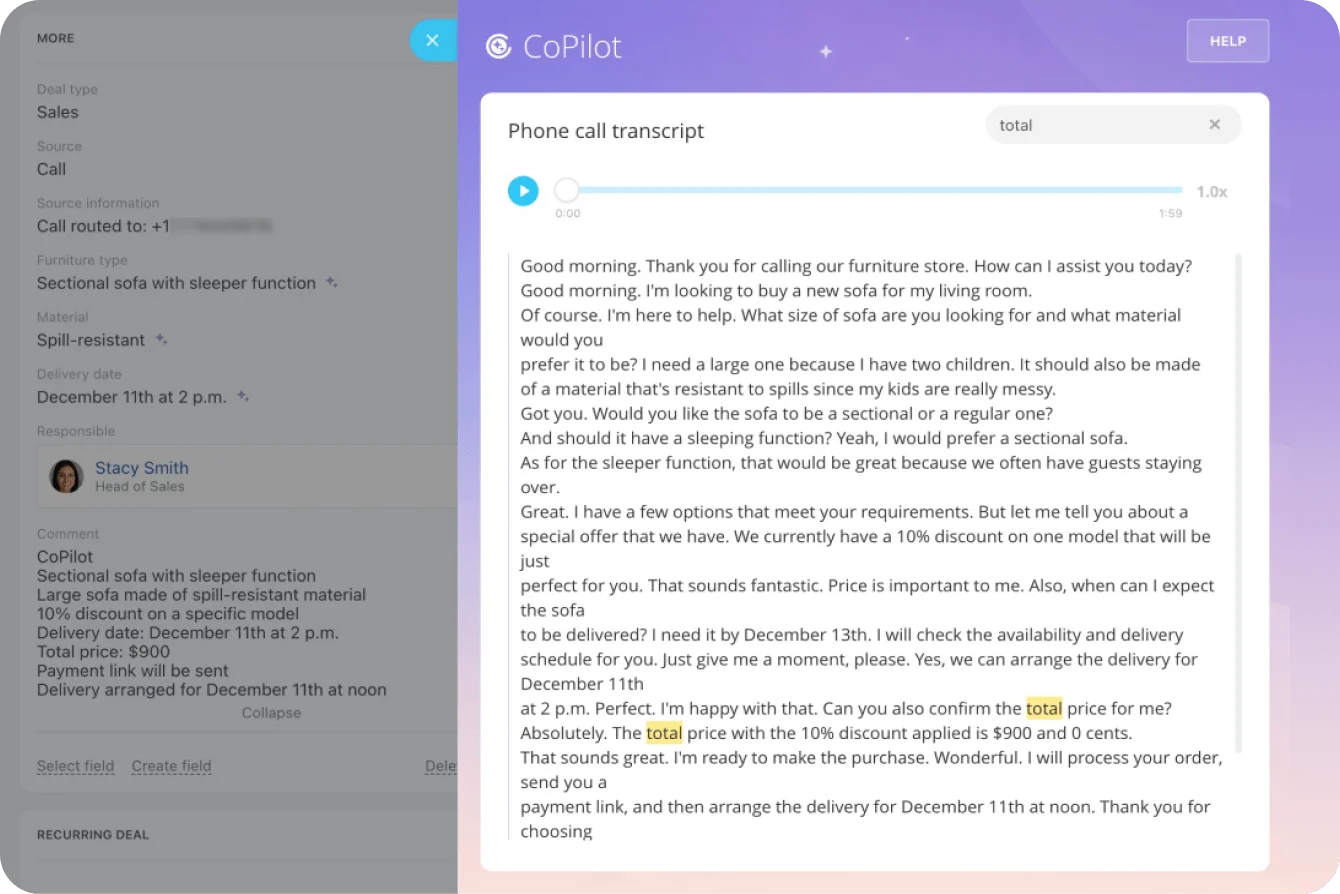

The most common fear is job displacement. Clear communication reduces this uncertainty: explain why AI tools (like Bitrix24's CoPilot) are being introduced, which tasks they'll handle, and how they support rather than replace the team's work.

Create learning pathways

AI adoption creates opportunities for professional growth. Support the transition by:

- Encouraging skills in AI collaboration and data literacy

- Running internal workshops and knowledge-sharing sessions

- Documenting how AI tools are used so new employees can onboard into AI-assisted workflows

Keep information flowing

Trust grows when employees feel informed. Regular updates about AI initiatives — what's being tested, what's working, what's changing — prevent confusion.

A shared workspace where teams see project updates and initiative timelines gives employees visibility into how AI is being integrated, not just that it's happening.

Challenge 3: Ensuring responsible and ethical AI use

As AI systems become more powerful, they raise questions about responsibility that most organizations haven't formalized.

Understand the risks:

- Bias in data → affects hiring decisions, customer recommendations, pricing models

- Privacy violations → customer data processed without proper consent or controls

- Compliance gaps → emerging AI regulations that teams haven't accounted for

- Lack of transparency → automated decisions that no one can explain

- Unclear accountability → when AI produces incorrect outcomes, who owns the correction?

Before scaling AI, organizations need clear governance. AI governance refers to the policies, processes, and accountability structures that control how AI tools are selected, deployed, monitored, and audited across an organization.

Establish governance before scaling

Effective AI governance includes:

- Approval processes before new tools are deployed

- Human review requirements for high-impact decisions

- Documentation of how AI systems are used in each workflow

- Regular audits of automated outcomes and data sources
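As a rough illustration, the four governance elements above could be written down as a simple checklist that is run against each tool in an inventory. This is a minimal sketch, not a prescribed method: the `AIToolRecord` fields, the `governance_gaps` function, and the 90-day audit interval are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class AIToolRecord:
    # Hypothetical inventory entry; every field name here is illustrative.
    name: str
    approved: bool             # passed an approval process before deployment
    high_impact: bool          # influences high-impact decisions (e.g. hiring, pricing)
    human_review: bool         # a person reviews outputs before they are acted on
    workflow_documented: bool  # usage is documented for the workflow it sits in
    days_since_audit: int      # days since outcomes and data sources were audited


def governance_gaps(tool: AIToolRecord, audit_interval_days: int = 90) -> list[str]:
    """Return the governance requirements this tool does not yet meet."""
    gaps = []
    if not tool.approved:
        gaps.append("missing deployment approval")
    if tool.high_impact and not tool.human_review:
        gaps.append("high-impact decisions lack human review")
    if not tool.workflow_documented:
        gaps.append("workflow usage is undocumented")
    if tool.days_since_audit > audit_interval_days:  # assumed quarterly cadence
        gaps.append("audit overdue")
    return gaps


# Hypothetical example: a chatbot deployed without approval and not audited recently.
chatbot = AIToolRecord(
    name="support chatbot",
    approved=False,
    high_impact=False,
    human_review=False,
    workflow_documented=True,
    days_since_audit=120,
)
print(governance_gaps(chatbot))
```

An empty list means the tool meets all four checks under these assumed rules; anything else is a to-do list for whoever owns governance.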

Maintain visibility across AI-integrated workflows

Transparency becomes critical when AI is embedded in daily processes. Bitrix24 supports this with centralized reporting that tracks tasks, approvals, and project progress, so when AI-generated outputs move through defined workflows, it's clear who reviewed what and when.

Challenge 4: Preventing AI tool overload

When departments adopt AI tools independently, organizations end up with a fragmented technology landscape. Marketing uses one platform, sales another, support a third. And none of them connect.

What fragmentation actually costs:

- Workflows disconnect across teams

- Data scatters across platforms with no single source of truth

- Collaboration suffers when departments can't see each other's work

- Leaders lose visibility into whether AI is delivering results or just adding complexity

Centralize the operating environment

The solution isn't to restrict experimentation; it's to channel it through a shared system. When teams operate inside a unified workspace, AI tools integrate into existing tasks, projects, and communication channels rather than operating in isolation.

This allows leaders to:

- Maintain visibility across departments

- Standardize workflows that include AI-assisted tasks

- Monitor performance and reduce unnecessary tool duplication

Challenge 5: Developing the new skills leaders need

Traditional management skills still matter, but leaders now need additional capabilities to guide teams that rely on both people and intelligent systems.

Develop AI literacy

Leaders don't need to become tech experts overnight, but they should understand:

- Basic capabilities and limitations of AI tools their teams use

- How to evaluate new technologies and ask informed questions about data quality

- Where human oversight remains essential and where automation is safe to scale

Lead organizational change

Introducing AI requires teams to adjust how they work. Change management becomes essential:

- Redesign workflows around automation rather than bolting AI onto existing processes

- Support employees through capability development

- Bridge collaboration between technical and non-technical teams

Bitrix24 supports these transitions with project management tools that give leaders visibility across priorities as processes evolve.

Focus on strategy, not supervision

AI can automate routine tasks that once required constant oversight. This creates an opportunity for leaders to shift from tracking individual activities to guiding long-term goals. When workflows are visible and organized, leadership becomes about aligning people, processes, and technology — not monitoring who's online.

Top tip: Treat AI like a new team member, not a tool

AI changes how work gets done, not just how fast it gets done. The shift is in delegation, oversight, and accountability. If you wouldn’t assign a task to a junior hire without review, don’t let AI run it unchecked either.

When these challenges look different

Not every organization faces all five challenges equally. Context shapes which ones demand attention first:

- Companies just getting started with AI. If your organization has tested a few tools but hasn't embedded AI into core workflows yet, governance frameworks and fragmentation aren't your immediate problems. Focus on building trust (Challenge 2) and developing leadership skills (Challenge 5) first — the organizational muscle matters more than the tools at this stage.

- Highly regulated industries (healthcare, finance, legal). Challenge 3 (ethical use and governance) isn't optional; it's a compliance requirement with real legal exposure. Governance must precede deployment, not follow it, and may require legal review of every AI tool before it touches customer or patient data.

- Mid-size companies without a dedicated AI or technology strategy role. Many companies with between 100 and 500 employees are adopting AI without anyone explicitly owning the coordination. Nobody reports on what tools are in use, nobody evaluates whether they overlap, and the CEO hears about AI initiatives secondhand. In these organizations, Challenge 4 (fragmentation) tends to hit hardest: assign ownership before the tool count gets unmanageable.

- Companies where AI adoption is driven by individual employees, not leadership. In many organizations, the first AI adopters aren't executives; they're individual contributors using ChatGPT, Copilot, or Midjourney in their daily work without formal approval. Leadership's challenge isn't driving adoption; it's catching up to what's already happening and wrapping governance around tools that are already embedded in workflows.

AI adoption doesn’t fail because teams lack tools. It fails when leadership doesn’t align priorities with how those tools are actually being used.

Leadership will determine AI success

The companies that win are not the ones using the most AI tools. They are the ones that decide how those tools are used, who owns the outcomes, and where accountability sits.

Right now, is your AI strategy something you’re leading, or something your teams are piecing together on their own?

Start for free with Bitrix24 to turn scattered experiments into structured workflows you can actually see, manage, and scale.

Frequently asked questions

How do I introduce AI tools without creating employee anxiety?

Explain the purpose before introducing the tool. Frame AI as a support system that handles repetitive tasks so the team can focus on higher-value work. Give employees access to training before deployment, involve them in pilot programs, and share results transparently. Anxiety usually comes from uncertainty, not from the technology itself.

What's the biggest mistake companies make when adopting AI?

Letting individual teams adopt AI tools without central coordination. This creates a fragmented landscape where data is scattered, workflows don't connect, and leadership has no visibility into what's being used. The fix isn't to slow adoption; it's to channel it through a shared system where every AI-assisted workflow is visible and trackable.

How do I create an AI governance policy?

Start with three elements: an approval process for new tools before deployment, a human review requirement for any AI output affecting high-stakes decisions (hiring, pricing, customer communications), and documentation standards for how AI is used in each workflow. Assign a governance owner and review quarterly as tools and regulations evolve.

Do leaders need technical AI skills?

Yes, though not deep technical ones. Leaders need AI literacy: understanding what tools can and can't do, knowing the right questions to ask about data quality and algorithmic bias, and recognizing where human judgment remains essential. The goal isn't to build models but to evaluate investments and guide responsible use.

How do I measure whether AI is actually helping my team?

Track the same outcomes you'd track without AI (task completion rates, project timelines, quality, response times), and compare before and after. Avoid relying solely on adoption metrics like "number of employees using AI," which measure activity rather than impact. The most useful indicators connect AI usage to measurable business outcomes.
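The before-and-after comparison can be as simple as a percent change per metric, as long as you note which direction counts as better. The sketch below is illustrative only: the `MetricComparison` class is a made-up helper and the figures are hypothetical, not survey data or benchmarks.

```python
from dataclasses import dataclass


@dataclass
class MetricComparison:
    # Hypothetical helper for comparing one outcome before and after AI adoption.
    name: str
    before: float
    after: float
    higher_is_better: bool

    def percent_change(self) -> float:
        """Relative change from the 'before' baseline, in percent."""
        return (self.after - self.before) / self.before * 100

    def improved(self) -> bool:
        """True if the metric moved in the desired direction."""
        change = self.after - self.before
        return change > 0 if self.higher_is_better else change < 0


# Made-up figures for illustration; substitute your own baselines.
metrics = [
    MetricComparison("task completion rate (%)", before=80.0, after=88.0, higher_is_better=True),
    MetricComparison("avg. response time (hours)", before=10.0, after=7.5, higher_is_better=False),
]

for m in metrics:
    direction = "improved" if m.improved() else "did not improve"
    print(f"{m.name}: {m.percent_change():+.1f}% ({direction})")
```

A change like this shows correlation with the rollout, not proof that AI caused it, so it helps to compare periods with similar workload and staffing.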